My ScrapingBee journey

Here at Postman, we have been harvesting publicly available OpenAPI artifacts from GitHub for a couple of months to better understand how people are authoring or generating the API contracts for their APIs. It is pretty straightforward to search for open APIs using the GitHub API, but once we had exhausted what was available, we wanted to explore what was on the open web. The challenge is that not everything on the web has an API (someday it will), which makes searching for, identifying, and harvesting open APIs much harder. To accomplish what we needed, we realized that we would need a scraping solution, and not just any scraping solution: we needed an API-first scraping solution.

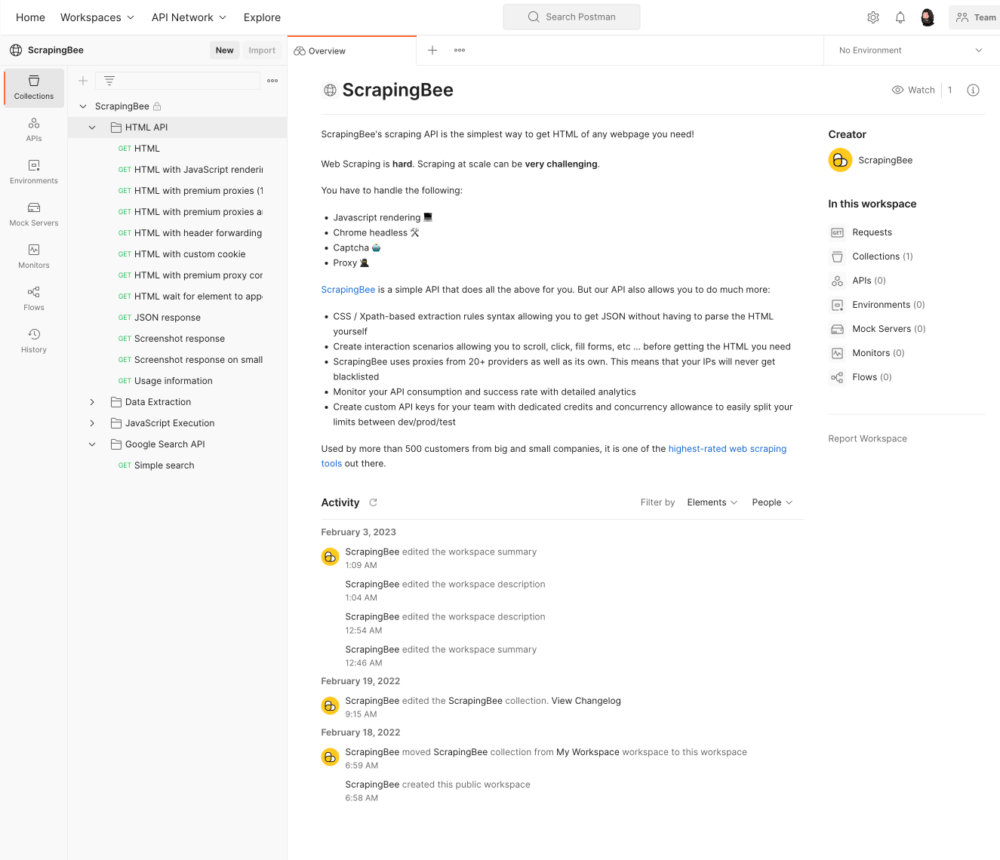

When I need an API solution, where do I go? The Postman Public API Network. So, I pulled up Postman, searched for “scraping,” and began looking through the workspaces and collections available. Pretty quickly, one team’s Postman workspace stood out to me: ScrapingBee. It took me only 30 seconds to get an API key and begin making calls with the ScrapingBee API collection.

ScrapingBee doesn’t just provide me with a reference collection for their API—they really do the work to ensure I’m learning how to use their API to accomplish what I need. For example, they provide variations of API requests that work for many of the common use cases for scraping data from web pages.

The Postman Open Technologies team is on a mission to find all of the OpenAPI files that exist behind SwaggerUI and other JavaScript-enabled documentation solutions. If you’ve ever had to programmatically navigate JavaScript applications, you know this isn’t straightforward. With ScrapingBee, the second example request in their collection demonstrates JavaScript rendering. I put in the URL for a known API documentation page I had handy and, boom, it returned the rendered HTML. All I had to do was parse all the URLs from the page and identify which one was the OpenAPI artifact I needed.
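The link-parsing step can be sketched in a few lines of Python. This is only an illustration of the idea, not the logic in my collection: the hint words, the file extensions, and the `find_openapi_candidates` name are all assumptions made for the example.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin

# Hypothetical heuristics: words and extensions that suggest an OpenAPI file.
CANDIDATE_HINTS = ("openapi", "swagger", "api-docs")
CANDIDATE_EXTENSIONS = (".json", ".yaml", ".yml")


class LinkCollector(HTMLParser):
    """Collects every href and src attribute value found in the page."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        for name, value in attrs:
            if name in ("href", "src") and value:
                self.links.append(value)


def find_openapi_candidates(rendered_html, base_url):
    """Return absolute URLs that look like OpenAPI artifacts, in page order."""
    collector = LinkCollector()
    collector.feed(rendered_html)
    candidates = []
    for link in collector.links:
        absolute = urljoin(base_url, link)
        # Ignore the query string when checking the extension and hint words.
        path = absolute.split("?", 1)[0].lower()
        looks_like_spec = path.endswith(CANDIDATE_EXTENSIONS) and any(
            hint in path for hint in CANDIDATE_HINTS
        )
        if looks_like_spec and absolute not in candidates:
            candidates.append(absolute)
    return candidates
```

A filename heuristic like this will miss definitions served from unusual paths, so treat the result as a list of candidates to fetch and check, not a final answer.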

Within five minutes, I had found a solution to what I needed to accomplish—something that I assumed would take me hours or days. Now, I just needed to find a way that I could easily search for APIs on the open web that used SwaggerUI, and just as I was leaving my ScrapingBee collection to head back to the Postman API Network, I noticed a Google Search API folder at the bottom of the collection…what was this?

Yep, ScrapingBee has a Google Search folder with a single request in it—simple search. If you work in the API world, you know that there is no Google Search API, and I was preparing to use the Bing Search API to get what I needed. But I ended up finding the solution right in front of me, so I thought I’d take a moment to see if it would provide what I needed.

My API key was already in my environment, so I put in “location API SwaggerUI” and hit send on my Postman request. It returned 100 results for pages that potentially had an OpenAPI present. All I had to do was take the 100 URLs, pass each one to the JavaScript rendering endpoint I had played with before, and parse my OpenAPI files from the rendered HTML. That was it. I had found the scraping solution I needed in less than 15 minutes. Impressive.
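The overall flow is a small pipeline: search, render each result, then pull out the candidate links. Here is a minimal sketch of that shape, assuming the two ScrapingBee requests are wrapped in functions you supply yourself. The `harvest` function and its `search`, `render`, `extract_links`, and `is_spec_url` parameters are hypothetical names for this example and not part of any ScrapingBee or Postman API.

```python
def harvest(query, search, render, extract_links, is_spec_url):
    """Run one search, render each result page, and collect candidate spec URLs.

    search(query)            -> iterable of result page URLs
    render(page_url)         -> rendered HTML for that page
    extract_links(html, url) -> iterable of absolute links found in the HTML
    is_spec_url(link)        -> True if the link looks like an OpenAPI file
    """
    found = []
    for page_url in search(query):
        try:
            html = render(page_url)
        except Exception:
            # One page that fails to render should not stop the whole run.
            continue
        for link in extract_links(html, page_url):
            if is_spec_url(link) and link not in found:
                found.append(link)
    return found
```

Passing the steps in as functions keeps the sketch independent of how each request is actually made, so the same loop works whether the calls come from a script or are mirrored as chained requests in a collection.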

Ultimately, I have to look through about 2,000 search results and 40,000 links within those results to find about 100 valid OpenAPI files, and ScrapingBee’s pricing works out at that volume. I was able to write my search-and-harvest collection to do this work in about two hours. All said and done, I’d say it took me about 2.5 hours from finding ScrapingBee in the Postman API Network to having my collection scheduled to run every five minutes using a Postman monitor. I am back to working on other things, watching my OpenAPI files triple every couple of hours. This allows us to not only find new and interesting APIs to tune into, but also learn from how they’re shaping their APIs by studying their OpenAPI definitions. Over the coming months, you’ll see us releasing new findings from this work and sharing what we learn from across the API landscape.
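About 100 files out of 40,000 links is a yield of roughly 0.25%, so most of the work is throwing away links that are not OpenAPI definitions. A loose structural check is enough for a first pass. The sketch below is an assumption about what such a check could look like, not the check my collection uses: it handles JSON only (YAML would need a third-party parser), and `looks_like_openapi` is a hypothetical name.

```python
import json


def looks_like_openapi(text):
    """Loose first-pass check that a JSON document resembles an OpenAPI file.

    This is not full schema validation. It only checks for a version field,
    an info object, and a paths object at the top level.
    """
    try:
        doc = json.loads(text)
    except ValueError:
        # Not JSON at all (for example an HTML error page).
        return False
    if not isinstance(doc, dict):
        return False
    has_version = isinstance(doc.get("openapi"), str) or isinstance(
        doc.get("swagger"), str
    )
    has_info = isinstance(doc.get("info"), dict)
    has_paths = isinstance(doc.get("paths"), dict)
    return has_version and has_info and has_paths
```

Anything that passes can then go through a proper OpenAPI validator before it is counted as valid.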
