Looping through a Data File in the Postman Collection Runner

Update, January 2020: Want to see how the Postman Collection Runner has evolved even further? Read our more recent blog post about Postman product improvements.

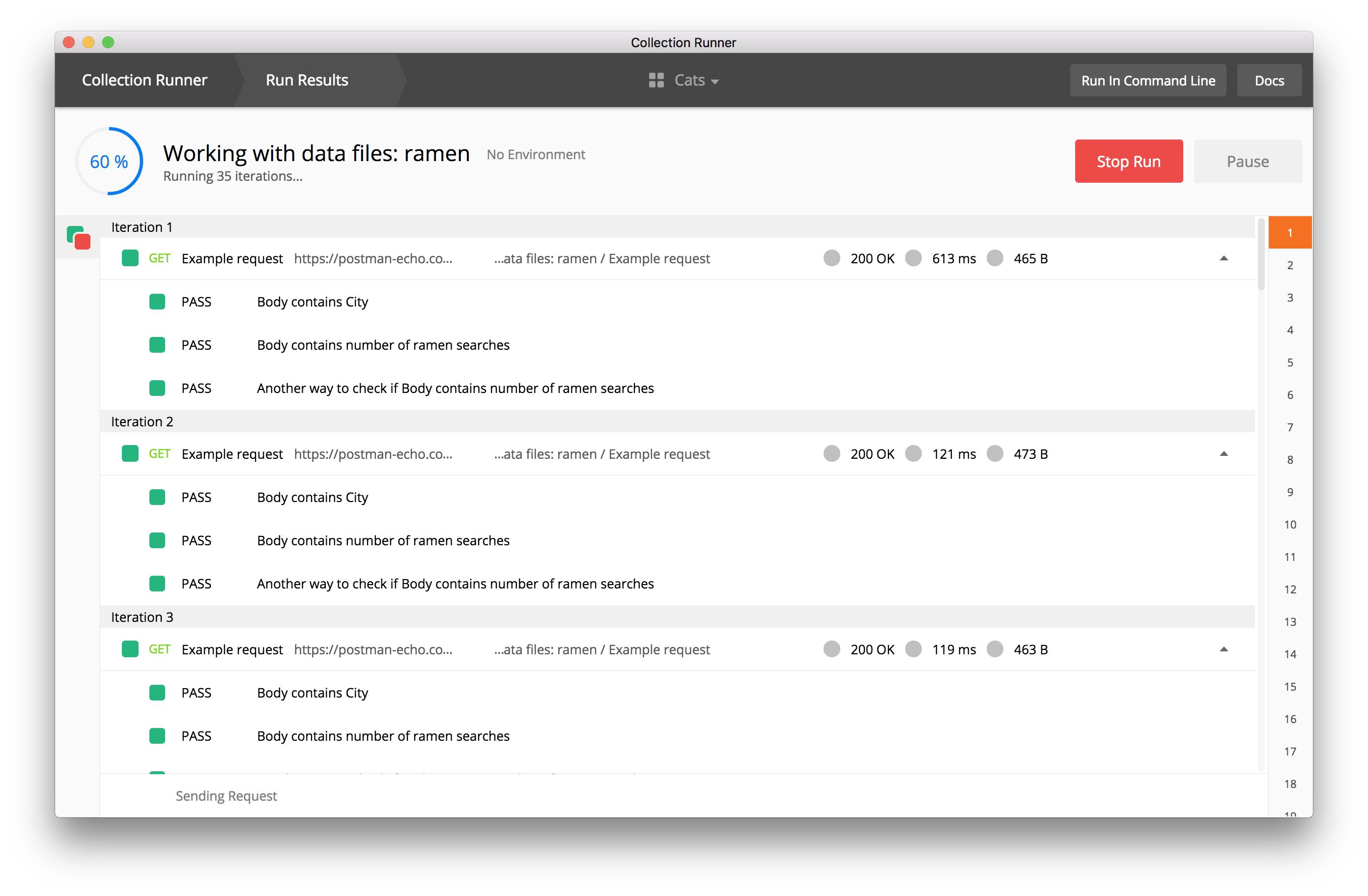

Postman’s Collection Runner lets you run all the requests inside a collection locally in the Postman app. It also runs API tests and generates reports so that you can measure the performance of your tests.

If you upload a data file to the Collection Runner, you can:

- Test for hundreds of scenarios

- Initialize a database

- Streamline setup or teardown for testing

Let’s start with the basics.

Run a collection with the Collection Runner

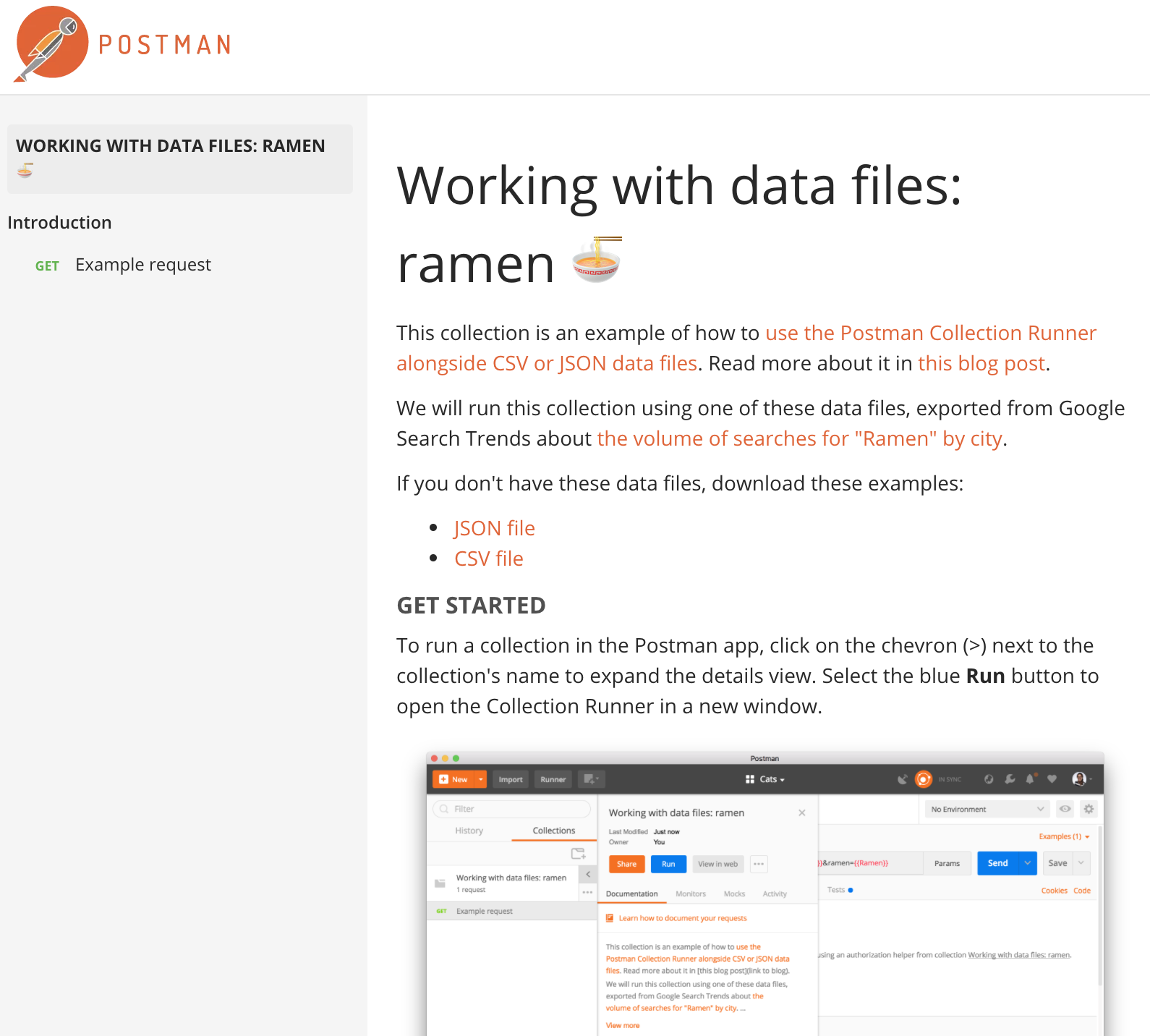

To run a collection in the Postman app, click on the chevron (>) next to the collection’s name to expand the details view. Select the blue Run button to open the Collection Runner in a new window.

Verify the collection and environment if you’re using one, and hit the blue Run button. You’ll see the collection requests running in sequence and the results of your tests if you’ve written any.

Use data variables

What if you want to loop through data from a data file? This would allow you to test for hundreds of scenarios.

In the Postman app, you can import a CSV or JSON file and use the values from the data file in your requests and scripts. You do this with data variables, which use a syntax similar to that of environment and global variables.

Using data variables in requests

Text fields in the Postman app, like the authorization section or parameters data editor, rely on string substitution to resolve variables. Therefore, you should use the double curly braces syntax like {{variable-name}} in the text fields.
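To make the substitution idea concrete, here is a minimal sketch in plain JavaScript. It is not Postman's implementation; the resolveVariables helper and the sample row are hypothetical, and the sketch only illustrates how a {{city}} placeholder in a URL could be replaced with a value from the current data row.

```javascript
// Illustrative only: a simplified stand-in for double-curly-brace substitution.
// `resolveVariables` is a hypothetical helper, not part of Postman.
function resolveVariables(template, row) {
  return template.replace(/\{\{([^{}]+)\}\}/g, (match, name) => {
    const key = name.trim();
    // Leave unknown placeholders untouched so a missing column is easy to spot.
    return Object.prototype.hasOwnProperty.call(row, key) ? String(row[key]) : match;
  });
}

const sampleRow = { city: "Tokyo" }; // hypothetical data row
const resolvedUrl = resolveVariables(
  "https://www.postman-echo.com/get?city={{city}}",
  sampleRow
);
console.log(resolvedUrl);
```

In the app itself you only type the placeholder into the text field; the replacement happens when the request is sent.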

Using data variables in scripts

The pre-request and test script sections of the Postman app run JavaScript rather than resolving plain text. There, you can use Postman’s pm.* API, such as the pm.iterationData.get("variable-name") method, to access the values loaded from the data file.
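A short sketch of reading data variables in a script might look like the following. The column names city and searches are assumptions made for illustration, and the fallback object exists only so the snippet can also run outside Postman, where pm is not defined.

```javascript
// `pm` exists only inside Postman's script sandbox. The fallback object is a
// hypothetical stand-in (one made-up row) so this sketch also runs in plain Node.js.
const sandbox = typeof pm !== "undefined"
  ? pm
  : { iterationData: { get: (name) => ({ city: "Tokyo", searches: "87" })[name] } };

// Read values for the current iteration by column (CSV) or key (JSON) name.
const city = sandbox.iterationData.get("city");
const searches = Number(sandbox.iterationData.get("searches"));

console.log(`Iteration data: city=${city}, searches=${searches}`);
```

Inside Postman you would normally call pm.iterationData.get directly; the indirection here is only for portability of the example.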

Use data files

Using a CSV file

The CSV file should be formatted so that the first row contains the variable names that you want to use inside the requests. After that, every row will be used as a data row. The line endings of the CSV file must be in the UNIX format. Each row should have the same number of columns.
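As a rough illustration of that layout, the sketch below turns a small CSV string into one object per data row, using the first row as variable names. The sample data and the csvToIterations helper are hypothetical, and the parsing is deliberately naive: it assumes UNIX line endings and does not handle quoted fields that contain commas.

```javascript
// Hypothetical sample: header row with variable names, then one data row per iteration.
const csv = "city,searches\nTokyo,87\nOsaka,64\n";

// Naive parser for illustration only (no support for quoted fields).
function csvToIterations(text) {
  const [header, ...rows] = text.split("\n").filter((line) => line.length > 0);
  const names = header.split(",");
  return rows.map((line) => {
    const cells = line.split(",");
    // Every row should have the same number of columns as the header.
    if (cells.length !== names.length) {
      throw new Error(`Expected ${names.length} columns, got ${cells.length}: ${line}`);
    }
    return Object.fromEntries(names.map((name, i) => [name, cells[i]]));
  });
}

const iterations = csvToIterations(csv);
console.log(iterations);
```

Each resulting object corresponds to the set of data variables available during one iteration of the run.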

Using a JSON file

The JSON file should be formatted as an array of objects, each made up of key-value pairs. Each key is the name of a variable, and each value is the data used within the request.
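For illustration, a data file in this shape could look like the JSON text embedded in the sketch below. The keys city and searches and their values are made up; the code simply parses the text and prints what each iteration would see.

```javascript
// Hypothetical contents of a JSON data file: a top-level array, one object per iteration.
const jsonText = `[
  { "city": "Tokyo", "searches": 87 },
  { "city": "Osaka", "searches": 64 }
]`;

const dataRows = JSON.parse(jsonText);
if (!Array.isArray(dataRows)) {
  throw new Error("Data file must contain a top-level array");
}

// Each element supplies the data variables for one iteration.
dataRows.forEach((dataRow, index) => {
  console.log(`Iteration ${index + 1}: city=${dataRow.city}, searches=${dataRow.searches}`);
});
```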

Try it yourself:

Click the orange Run in Postman button above to import this example collection into your local version of the Postman app.

We will run this collection using a data file about my 4th favorite type of Japanese food: ramen. The data was exported from Google Search Trends and shows the volume of searches for “Ramen” by city. Download one or both of these sample data files, and give it a try.

Hi,

How do I loop through an array without using data files in the Collection Runner?

I have an environment variable holding an array, IDs = ['123', '234', '345'] (assume this is a very large array).

I want to run GET “/get/id={{id}}” once for each id in the array without uploading a data file. Is it possible to use a script to set the environment variable “id” in the pre-request script? Thanks

FYI,

The array environment variable was populated from a previous GET request.

Then I want to use another GET to loop through all those IDs to validate them.

Hi, can we start from a particular row of a data file? I mean, I have 5 rows in my data file and I want my script to start picking up the data from the 3rd row. Is it possible to do that in Postman?

You may be able to do that using pre-request and test scripts. If you’d like help with it, please post on the Postman community forum: community.postman.com

I ran the Postman runner for 37,700 iterations and the report shows only 24k.

Have you seen this before? I have a test in place that lets me know whether the response was a success or not. It shows 22k succeeded and 2k failed. The math does not add up here.

I have a request body like

{
  "BookId": "1234",
  "Version": "34",
  "Engagement": {
    "Id": "51",
    "Name": "CCC"
  },
  "Modules": [
    {
      "Module1Id": "45",
      "Pages": "4",
      "Startchapter": "AS",
      "Endchapter": "DF"
    },
    {
      "Module2Id": "5",
      "Pages": "14",
      "Startchapter": "AFS",
      "Endchapter": "DFS"
    },
    {
      "Module3Id": "35",
      "Pages": "154",
      "Startchapter": "AFCS",
      "Endchapter": "DFRS"
    }
  ],
  "Data": {
    "Id": "67",
    "Code": "7"
  }
}

I am reading the module details from an external data file (CSV file).

In some cases I have to send the request body with 2 modules, sometimes with 1 module, and sometimes with all 3 modules.

How do I write the automation script in Postman so that, if my CSV file has a null or empty value for a module Id, that module’s details are not sent?

Hey there! The community forum would be a better place for that question: community.postman.com.

How can I use Postman scripting to pull the endpoints, see what the data looks like, and use that format in a loop that checks counts?

I have had a few cases where I ran the runner with 20K rows, but it kept running well past 20K records. When I scroll down in the results, I can see the runner is actually processing empty rows from the CSV file. Is there a way to ensure only populated records are processed?

I have been trying to make posts to Workplace by Facebook. The only formatting code I have found is \r\n for a line break. Are there any other codes for text formatting, such as making text within the post (the body of the Postman request) bold or italicised, or making part of the text a header and the rest the body?

The rendering of your post depends on how the Workplace API interprets the message. In this case, Postman is just the vehicle to send information.

Hi,

I’m working on a project with multiple collections. Is it possible to use a single CSV file and assign a particular sheet for each collection?

Is there a way to save the data set for a particular collection so I can always run it instead of importing it for every run?

It would be helpful if you could also share a way to run from the CLI (newman) with data sets, if it can be done.

You can use the `-d` (`--iteration-data`) flag to include a data file: https://github.com/postmanlabs/newman#newman-run-collection-file-source-options

Hello, my data has strings that contain commas. How do I escape a comma in the data so that Postman interprets it as part of the string and not as a separator?

Like:

Name, Profession

Stewart, Patrick, Movies, Stage

Can someone share how to set the data sheet in the Grunt file to set up a Jenkins build?

This is what I have. I tried data and dataiteration, but it’s not working.

grunt.registerTask('apitests', function(TestSuite, Environment, DataSheet) {
    var newman = require('newman');
    var done = this.async();
    newman.run({
        collection: require("./tests/" + TestSuite + ".postman_collection.json"),
        environment: require("./data/" + Environment + ".postman_environment.json"),
        dataiteration: require("./data/" + DataSheet + ".csv"),

How can I automate different API methods (POST, GET…) by supplying data externally in a single CSV file?

Hi, how can I run a range of test data from a CSV file? For example, if the CSV file has 10 rows of test data, how can I run only rows 1 through 5 from the Collection Runner?

Nice feature. It would be nice to be able to write something back into the source CSV depending on the API response or test. For example, I have a CSV/JSON file with order IDs, and after the collection run it would be nice to have an updated column or JSON key such as “imported = true”.

Why would my Start Run button in the collection runner be disabled?

How can I pass a data file via the Postman CLI? Is there a command for it?

Can the same be done for POST?

The variables in this method force you to add your variable as a URL parameter to the endpoint URL. From your example..

?city={{city}}

I need my URL to include only the variable and not a parameter

So whereas your example gives

https://www.postman-echo.com/get?city={{city}}

my endpoint/scenario requires a format like this

https://www.postman-echo.com/get/{{city}}

without any type of URL parameter, where the city would actually be the only part needed in the URL (in my specific case, the city is an ID of a specific record)

I can’t seem to find anyone with this problem in the community. How can I run a variable in a loop without parameters? This is super limiting for me, and it seems strange that there’s no use case for this. My specific use case is for a massively popular global SaaS product, so it’s not like it’s super niche. I’ve tried just typing in {{variable}} without adding it to the variables list, and entering it at the collection and individual request level, and it seems to only pick up a blank space and not actually read the data file when I do any of those.

Any ideas?

Hi, Please contact our support team at https://www.postman.com/support and they’ll be glad to help you.

I do not see a blue Run button anywhere in Postman. Is this a restricted feature?

Please contact our support team at http://www.postman.com/support and they’ll be able to help you.

Hi, I want to cycle through the CSV file again once it runs out of rows. For example, if the CSV has 2 lines and iterationCount is set to 4, is there any way to start again from the 1st line after reaching the end of the file?

as usual only half of an answer requiring me to hopefully find the full answer outside of postman in the wild. just another strike against this product.